Understanding Batch Effect and Normalization in scRNA-Seq Data

A practical guide to batch effect correction and normalization in scRNA-seq data, covering when integration is justified, leading tools including Harmony, Seurat, BBKNN, and scVI, evaluation strategies, and how to avoid overcorrection.

Single-cell RNA sequencing (scRNA-seq) makes it possible to resolve heterogeneous tissues at the level of individual cells. But the signal you care about sits on top of a large amount of technical structure. Differences in tissue handling, dissociation chemistry, library preparation, sequencing depth, chemistry version, operator, center, and donor composition can all change the observed expression landscape. In practice, two problems get conflated here: normalization, which adjusts for technical differences between cells within a dataset, and batch effect correction or integration, which aligns datasets across batches, donors, runs, or studies.

That distinction matters. Normalization is almost always required. Batch correction is not always appropriate, and when it is used, it should be driven by a clear biological question. If the goal is atlas construction, multi-donor comparison, or label transfer across studies, integration is often necessary. If the goal is to quantify condition-specific biology, aggressive integration can remove the very signal you want to measure.

This article explains what batch effects actually look like in scRNA-seq, where normalization fits into the pipeline, which correction methods are currently most useful, and how to evaluate whether integration helped or quietly damaged the biology.

Why batch effects are a serious problem in scRNA-seq

In bulk RNA-seq, batch effects usually distort sample-level profiles. In scRNA-seq, they can do more than shift expression values. They can reshape neighborhood graphs, split one cell state into several artificial clusters, or force biologically distinct populations to overlap. A dissociation protocol that preferentially stresses one sample, for example, may elevate mitochondrial and immediate early gene programs and produce a structure that looks biological but is mostly technical.

Common sources of batch effects in scRNA-seq include:

- different sample collection or preservation procedures

- differences in tissue dissociation and cell viability

- chemistry version or platform changes

- sequencing depth and ambient RNA contamination

- donor-to-donor variability when donor is not the biological variable of interest

- different cell composition across batches

The last point is where batch correction gets tricky. Some between-sample variation is technical. Some is real biology. Many integration failures happen when those two are not separated conceptually before analysis starts.

Start with experimental design, not software

The cleanest batch effect is the one you never introduce. Good study design still outperforms downstream rescue.

A few principles matter disproportionately:

- randomize samples across processing runs where possible

- balance conditions within batches instead of processing one condition per day

- keep dissociation, capture, and library preparation as consistent as possible

- include biological replicates, not just technical replicates

- record metadata that may later explain unwanted structure, including chemistry version, operator, run, lane, tissue handling time, and dissociation protocol

In multi-sample scRNA-seq, a confounded design cannot be fully repaired computationally. If all controls were processed in one run and all cases in another, the model has no reliable way to know whether the separation is technical or biological.
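A confounded design can be detected from the sample sheet before any sequencing data is analyzed. The following is a minimal sketch, assuming one batch label and one condition label per sample; the function names and the example sample sheets are hypothetical.

```python
from collections import defaultdict


def conditions_per_batch(batches, conditions):
    """Map each batch label to the set of conditions observed in it."""
    seen = defaultdict(set)
    for batch, condition in zip(batches, conditions):
        seen[batch].add(condition)
    return dict(seen)


def is_fully_confounded(batches, conditions):
    """True when the study has several conditions but no batch contains more than one."""
    per_batch = conditions_per_batch(batches, conditions)
    has_several_conditions = len(set(conditions)) > 1
    return has_several_conditions and all(len(c) == 1 for c in per_batch.values())


# Hypothetical sample sheets, one entry per sample.
confounded_runs = ["run1", "run1", "run2", "run2"]
confounded_conditions = ["control", "control", "case", "case"]

balanced_runs = ["run1", "run1", "run2", "run2"]
balanced_conditions = ["control", "case", "control", "case"]

print(is_fully_confounded(confounded_runs, confounded_conditions))
print(is_fully_confounded(balanced_runs, balanced_conditions))
```

This only flags the extreme case. Partially confounded designs, where conditions are unevenly spread across runs, still deserve a look at the full batch-by-condition table.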

Normalization and batch correction are not the same step

This is the most important conceptual distinction in the workflow.

Normalization

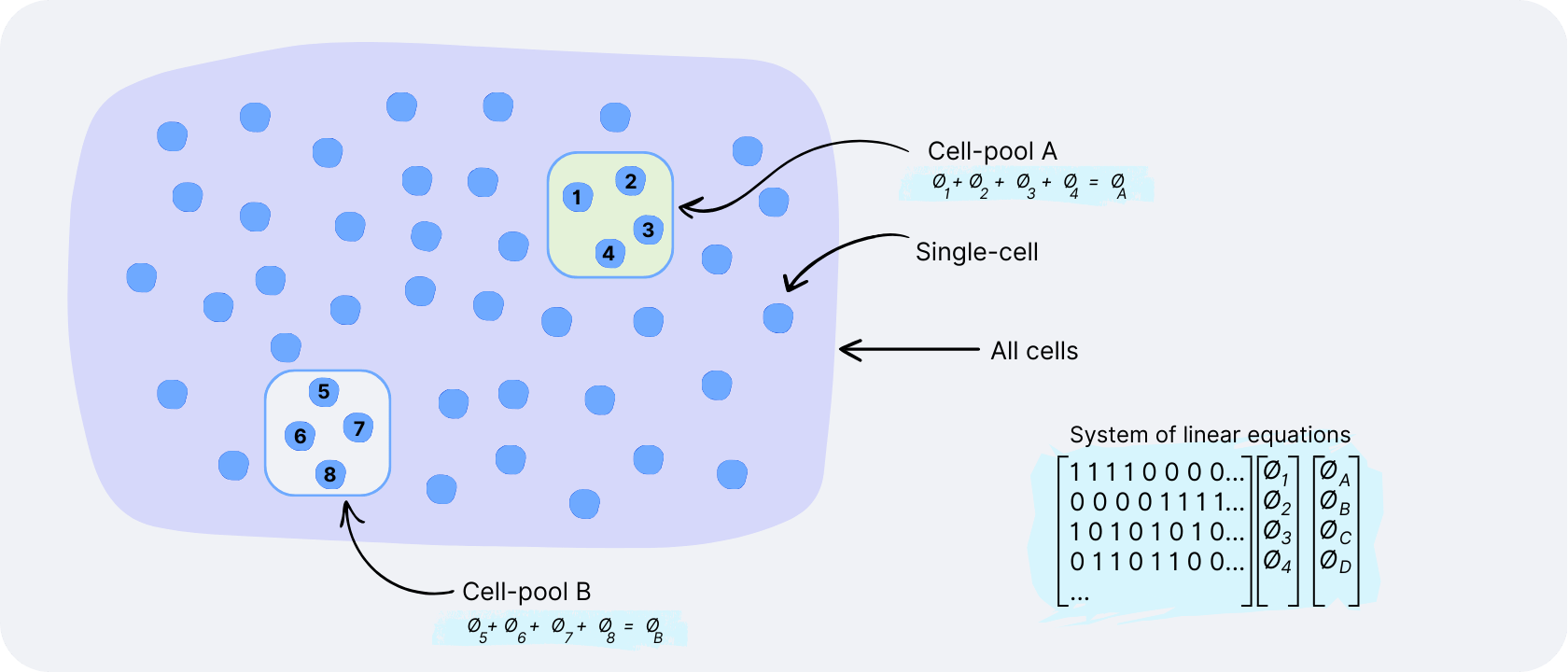

Normalization adjusts for cell-specific technical effects within a dataset, mainly differences in library size and capture efficiency. It makes cells more comparable before highly variable gene selection, dimensionality reduction, neighborhood graph construction, and clustering.

Typical outputs of normalization are log-normalized counts, size-factor normalized counts, or variance-stabilized residuals.

Batch correction or integration

Batch correction acts across datasets or batches. It tries to align shared cell populations while preserving true biological differences. In modern workflows, this usually happens in a latent space, PCA space, or graph structure, not by editing the raw count matrix itself.

That difference is why many analysts keep one object for visualization and integration, and another for tasks like differential expression that are better performed on uncorrected counts with batch modeled explicitly in the statistics.

What normalization should do in UMI-based scRNA-seq

For UMI-based data, normalization should account for differences in sequencing depth and compositional effects without flattening meaningful biology. It should also produce a representation that behaves well during PCA and neighborhood graph construction.

Without suitable normalization, you typically see one or more of the following:

- cells cluster by total UMI count rather than transcriptional identity

- rare populations disappear because low-depth cells lose weak markers

- highly expressed programs dominate principal components for technical reasons

- differential expression becomes unstable because one group systematically has different library size
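The first symptom in the list above can be checked directly by correlating the leading principal component with sequencing depth. The sketch below uses NumPy only and a synthetic matrix with a single cell type whose cells differ only in depth; the helper name, the synthetic data, and the scale factor of 10,000 are illustrative assumptions, not part of any specific tool.

```python
import numpy as np


def pc1_depth_correlation(matrix, counts):
    """Absolute Pearson correlation between PC1 scores and log total counts per cell.

    matrix: cells x genes representation to inspect (raw or normalized)
    counts: cells x genes raw counts, used only to compute depth
    """
    centered = matrix - matrix.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    pc1_scores = u[:, 0] * s[0]
    log_depth = np.log(counts.sum(axis=1))
    return abs(np.corrcoef(pc1_scores, log_depth)[0, 1])


# Synthetic data (hypothetical): one cell type, depth varies 20-fold across cells.
rng = np.random.default_rng(0)
n_cells, n_genes = 200, 50
depth = np.linspace(1_000, 20_000, n_cells)
profile = np.full(n_genes, 1.0 / n_genes)
counts = rng.poisson(depth[:, None] * profile)

# Simple depth normalization followed by log1p.
totals = counts.sum(axis=1, keepdims=True)
log_normalized = np.log1p(counts / totals * 1e4)

raw_corr = pc1_depth_correlation(counts.astype(float), counts)
norm_corr = pc1_depth_correlation(log_normalized, counts)
print(f"PC1 vs depth, raw counts: {raw_corr:.2f}")
print(f"PC1 vs depth, log-normalized: {norm_corr:.2f}")
```

On real data, a leading component that still tracks depth after normalization suggests that library size, not transcriptional identity, is driving the embedding.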

Current normalization approaches that actually matter

The field has become more opinionated over time. A few methods now dominate real scRNA-seq workflows, while others survive mostly because they are historically familiar.

| Method | Best for | Trade-offs |
|---|---|---|
| Log normalization | Routine UMI datasets, exploratory clustering, interoperability across tools | Falls short with large RNA content variation across cell types; limited variance stabilization |
| SCTransform | Complex datasets with broad depth variation, Seurat-centered workflows | More computational overhead; over-regression can suppress biology |
| Scran deconvolution | Heterogeneous datasets, composition bias settings, Bioconductor workflows | Less common in beginner workflows; more code-intensive stack |
| CLR | Antibody-derived tag data in multimodal datasets such as CITE-seq | Not a general RNA normalization strategy for scRNA-seq gene expression |
| Quantile normalization | Historically used in microarray settings | Can erase biological differences between cells; not recommended for UMI scRNA-seq |

Log normalization

This remains the standard baseline in Seurat and Scanpy workflows. Each cell's counts are divided by that cell's total counts, multiplied by a constant scale factor, and then log-transformed with a pseudocount of one.
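The arithmetic is short enough to write out. This is a minimal NumPy sketch on a dense cells-by-genes matrix, not the implementation used by any particular package; the function name is hypothetical and the scale factor of 10,000 is a common convention assumed here.

```python
import numpy as np


def log_normalize(counts, scale_factor=1e4):
    """Divide each cell by its total counts, rescale, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    if np.any(totals == 0):
        raise ValueError("remove cells with zero total counts before normalizing")
    return np.log1p(counts / totals * scale_factor)


# Two hypothetical cells with the same composition but a 10-fold depth difference.
shallow = np.array([[1, 3, 6]])
deep = shallow * 10

norm_shallow = log_normalize(shallow)
norm_deep = log_normalize(deep)
print(np.allclose(norm_shallow, norm_deep))
```

Cells with identical composition end up with identical values regardless of depth, which is the point of the method. The sketch ignores sampling noise, which is larger in shallow cells and is not removed by this step.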

Where it works well

- routine UMI datasets

- exploratory clustering

- smaller projects where simplicity matters

- workflows that need interoperability across many tools

Where it falls short

- datasets with large differences in RNA content across cell types

- cases where variance stabilization matters strongly

- workflows that need tighter control of technical covariates before PCA

Log normalization is still a valid default. It is not obsolete. It is just no longer the only serious option.

SCTransform

SCTransform models counts using regularized negative binomial regression and returns variance-stabilized values that often behave better for feature selection and dimensionality reduction. In Seurat v5, the updated sctransform v2 framework is the default SCT implementation. It is widely used when analysts want robust preprocessing and integration within the Seurat ecosystem.
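To make "variance-stabilized residuals" concrete, here is a simplified sketch of Pearson residuals under a negative binomial model. It is not sctransform: the per-gene regularized regression is replaced by a closed-form expected count, and the overdispersion `theta` is fixed at an assumed value of 100. The function name is hypothetical.

```python
import numpy as np


def pearson_residuals(counts, theta=100.0):
    """Pearson residuals under a negative binomial null model.

    Expected counts come from cell and gene totals (mu = row_sum * col_sum / total)
    and the variance is mu + mu^2 / theta. Residuals are clipped to +/- sqrt(n_cells).
    """
    counts = np.asarray(counts, dtype=float)
    n_cells = counts.shape[0]
    cell_totals = counts.sum(axis=1, keepdims=True)
    gene_totals = counts.sum(axis=0, keepdims=True)
    mu = cell_totals * gene_totals / counts.sum()
    variance = mu + mu ** 2 / theta
    residuals = np.zeros_like(counts)
    # Entries with zero expected count keep a residual of zero.
    np.divide(counts - mu, np.sqrt(variance), out=residuals, where=variance > 0)
    clip = np.sqrt(n_cells)
    return np.clip(residuals, -clip, clip)


# Hypothetical counts: 100 cells, three genes with low, medium, and high expression.
rng = np.random.default_rng(0)
counts = rng.poisson(lam=[0.5, 2.0, 10.0], size=(100, 3))
residuals = pearson_residuals(counts)
print(residuals.std(axis=0))
```

The residuals put lowly and highly expressed genes on a comparable scale, which is why this kind of output tends to behave well in feature selection and PCA.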

Where it works well

- complex datasets with broad depth variation

- Seurat-centered workflows

- cases where PCA and clustering are sensitive to technical structure

Trade-offs

- more computational overhead than simple log normalization

- added modeling complexity

- users need to be deliberate about what is regressed out, because over-regression can suppress biology

Scran deconvolution size factors

Scran remains one of the most principled methods for estimating size factors in sparse single-cell count data. Its pooling-based deconvolution is particularly useful when simple library-size normalization is biased by strong composition differences.

Where it works well

- heterogeneous datasets

- settings where composition bias is likely

- Bioconductor-based workflows

Trade-offs

- less common in beginner workflows than Seurat defaults

- usually part of a more code-intensive analysis stack

CLR normalization for antibody tags, not RNA

Centered log ratio (CLR) normalization is still relevant in multimodal datasets such as CITE-seq, but mainly for antibody-derived tag data. It is not a general RNA normalization strategy for standard scRNA-seq gene expression.
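For reference, the textbook form of the transform is sketched below for a cells-by-antibodies count matrix. Tools differ in the pseudocount they use and in whether they center across antibodies within a cell or across cells within an antibody, so the function name and both choices here are assumptions rather than any package's default.

```python
import numpy as np


def clr_per_cell(adt_counts, pseudocount=1.0):
    """Centered log ratio: log counts minus the mean log count within each cell."""
    logged = np.log(np.asarray(adt_counts, dtype=float) + pseudocount)
    return logged - logged.mean(axis=1, keepdims=True)


# Hypothetical antibody-derived tag counts: 2 cells x 3 antibodies.
adt = np.array([[10, 100, 1000],
                [1, 10, 100]])
clr = clr_per_cell(adt)
print(clr.round(2))
```

Each row sums to zero after the transform, so values describe an antibody's abundance relative to the other antibodies in the same cell rather than an absolute level.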

Methods you usually should not prioritize for UMI scRNA-seq

Quantile normalization is not a standard choice for modern UMI-based scRNA-seq. It was useful historically in microarray settings, but it imposes identical distributions in a way that can erase biologically meaningful differences between cells. In most single-cell RNA workflows, it is not the method you want to build around.
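The "identical distributions" problem is easy to see in a toy example. The sketch below is a plain NumPy quantile normalization across cells, with a hypothetical function name and made-up values; ties are broken arbitrarily by the sort.

```python
import numpy as np


def quantile_normalize(values):
    """Force every cell (row) to share the same set of values, assigned by rank."""
    values = np.asarray(values, dtype=float)
    # Rank of each gene within its cell (0 = lowest).
    ranks = values.argsort(axis=1).argsort(axis=1)
    # Reference distribution: mean of the sorted values across cells.
    reference = np.sort(values, axis=1).mean(axis=0)
    return reference[ranks]


# Two hypothetical cells with very different expression distributions.
cells = np.array([[0.0, 1.0, 2.0, 50.0],
                  [5.0, 10.0, 20.0, 40.0]])
normalized = quantile_normalize(cells)
print(np.sort(normalized, axis=1))
```

After the transform both cells contain exactly the same values, only in a different gene order. Any real difference in how expression is distributed within a cell, such as one dominant program in a specialized cell type, is gone.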

When batch correction is justified

You do not batch-correct because multiple samples exist. You batch-correct because the analysis requires alignment across samples and there is evidence that technical structure is dominating the embedding or neighborhood graph.

Batch correction is often justified when:

- the same cell type separates primarily by donor, run, chemistry, or site

- you are building a joint atlas across donors or studies

- you need cross-sample label transfer or integrated visualization

- you want to compare matched cell states across replicates after aligning shared populations

Batch correction is often not the first thing to do when:

- the biological variable of interest is expected to produce strong global shifts

- conditions differ so strongly that alignment would blur meaningful biology

- you are performing formal differential expression, where modeling batch in the design formula is often preferable to using corrected values

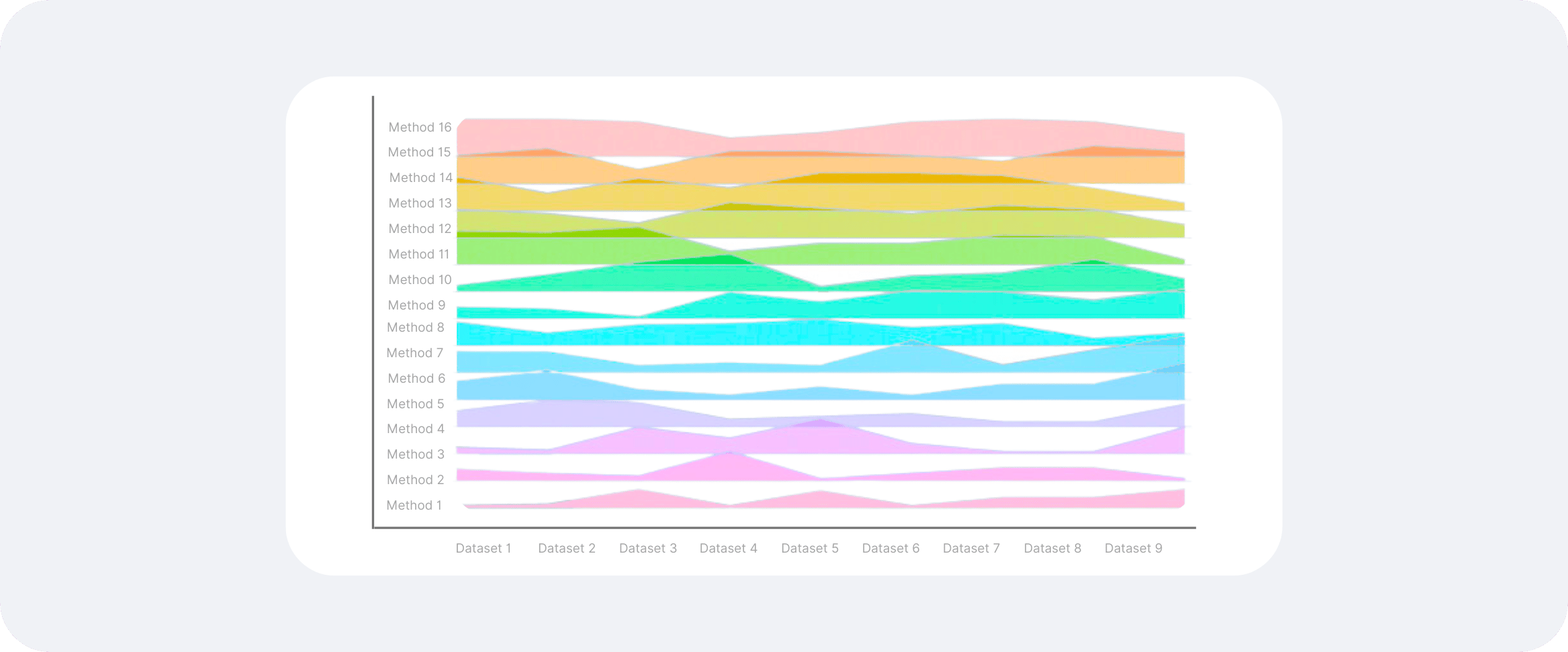

Leading tools for batch correction and integration

Below is the practical view, not just the catalog.

| Tool | Strengths | Limitations |
|---|---|---|
| Harmony | Fast and scalable; works well for donor or run effects; fits Seurat and embedding-based workflows | Depends on upstream PCA quality; can struggle with asymmetric batch composition; overcorrection risk |
| Seurat integration | Mature ecosystem; strong interoperability with clustering, visualization, and annotation; good biological performance | Memory-intensive at scale; requires method choice and parameter awareness; must separate integrated from count-level data |
| BBKNN | Very fast and lightweight; fits naturally into Scanpy workflows | Graph-level solution only; less flexible for complex nonlinear effects; sensitive to neighborhood settings |
| scVI / scANVI | Handles complex nonlinear batch structure; scales to large studies; strong for atlas building and reference mapping | Requires more technical familiarity; benefits from stronger compute infrastructure; still needs careful validation |
| Nygen Analytics | No-code workflows for normalization, visualization, clustering, and batch-aware analysis; removes routine coding overhead | Built on Scarf-based infrastructure; does not replace methodological judgment |

Harmony

Harmony operates in a low-dimensional embedding, typically PCA space, and iteratively removes batch-associated structure while trying to preserve shared biological organization.

Strengths

- fast and scalable

- works well for donor or run effects in many routine datasets

- easy to insert into existing Seurat or other embedding-based workflows

Limitations

- depends on the quality of the upstream PCA representation

- can struggle when batches have highly asymmetric cell composition

- should still be checked carefully for overcorrection

Seurat integration

Seurat's integration workflows, including anchor-based approaches and SCT-based integration, remain widely used for aligning batches, donors, and conditions. They are especially common in analyst teams already working in the Seurat ecosystem.

Strengths

- mature ecosystem

- strong interoperability with clustering, visualization, and annotation workflows

- good biological performance when parameters and reference strategy are chosen carefully

Limitations

- can be memory-intensive on larger studies

- requires method choice and parameter awareness

- users need to separate integrated representations for visualization from count-level data for downstream testing

BBKNN

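BBKNN changes how the neighborhood graph is built: instead of searching for nearest neighbors across all cells at once, each cell draws a fixed number of neighbors from each batch separately, and those per-batch neighbor sets are merged into one graph. It is attractive because it is fast and fits naturally into Scanpy workflows.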
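The core idea of a batch-balanced neighbor search can be sketched in a few lines of NumPy. This is an illustration of the principle with brute-force distances, not the BBKNN package itself, which also converts the neighbor lists into graph connectivities; the function name, parameters, and synthetic embedding are hypothetical, and each batch is assumed to contain more than `k_per_batch` cells.

```python
import numpy as np


def batch_balanced_neighbors(embedding, batches, k_per_batch=3):
    """For every cell, return its k nearest neighbors within each batch, side by side."""
    embedding = np.asarray(embedding, dtype=float)
    batches = np.asarray(batches)
    neighbors = []
    for label in np.unique(batches):
        members = np.flatnonzero(batches == label)
        # Euclidean distance from every cell to the cells of this batch.
        diff = embedding[:, None, :] - embedding[None, members, :]
        dist = np.sqrt((diff ** 2).sum(axis=2))
        # A cell must not be returned as its own neighbor.
        dist[members, np.arange(members.size)] = np.inf
        nearest = np.argsort(dist, axis=1)[:, :k_per_batch]
        neighbors.append(members[nearest])
    return np.hstack(neighbors)


# Hypothetical 2D embedding: batch "b" is shifted away from batch "a".
rng = np.random.default_rng(0)
embedding = np.vstack([rng.normal(0, 1, size=(20, 2)),
                       rng.normal(5, 1, size=(20, 2))])
batches = np.array(["a"] * 20 + ["b"] * 20)

nbrs = batch_balanced_neighbors(embedding, batches, k_per_batch=3)
print(nbrs.shape)
```

Every cell ends up with neighbors from both batches even though the batches do not overlap in the embedding. That is what produces mixing, and it is also why the approach can connect populations that exist in only one batch to unrelated cells in another.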

Strengths

- very fast

- lightweight

- good for exploratory integrated embeddings in Python workflows

Limitations

- graph-level solution rather than a general latent modeling framework

- less flexible than deep generative approaches for complex nonlinear effects

- sensitive to neighborhood settings and batch composition

scVI and scANVI

Deep generative models from scvi-tools are now among the most important integration approaches in single-cell analysis. scVI learns a latent representation that models count structure and batch, while scANVI adds label information in semi-supervised settings.

Strengths

- handles complex, nonlinear batch structure well

- scales effectively to large integrated studies

- strong choice for atlas building, reference mapping, and multimodal extensions in the scverse ecosystem

Limitations

- requires more technical familiarity than conventional workflows

- usually benefits from stronger computational infrastructure

- interpretation still depends on careful validation, not just a clean latent space

Nygen Analytics

Nygen Analytics provides no-code workflows for normalization, joint visualization, clustering, and batch-aware analysis on top of Scarf-based infrastructure. The main practical value is not that it replaces methodological judgment. It is that it removes routine coding overhead for teams that need to explore integrated single-cell datasets, compare conditions, and generate shareable outputs without rebuilding every step from scratch.

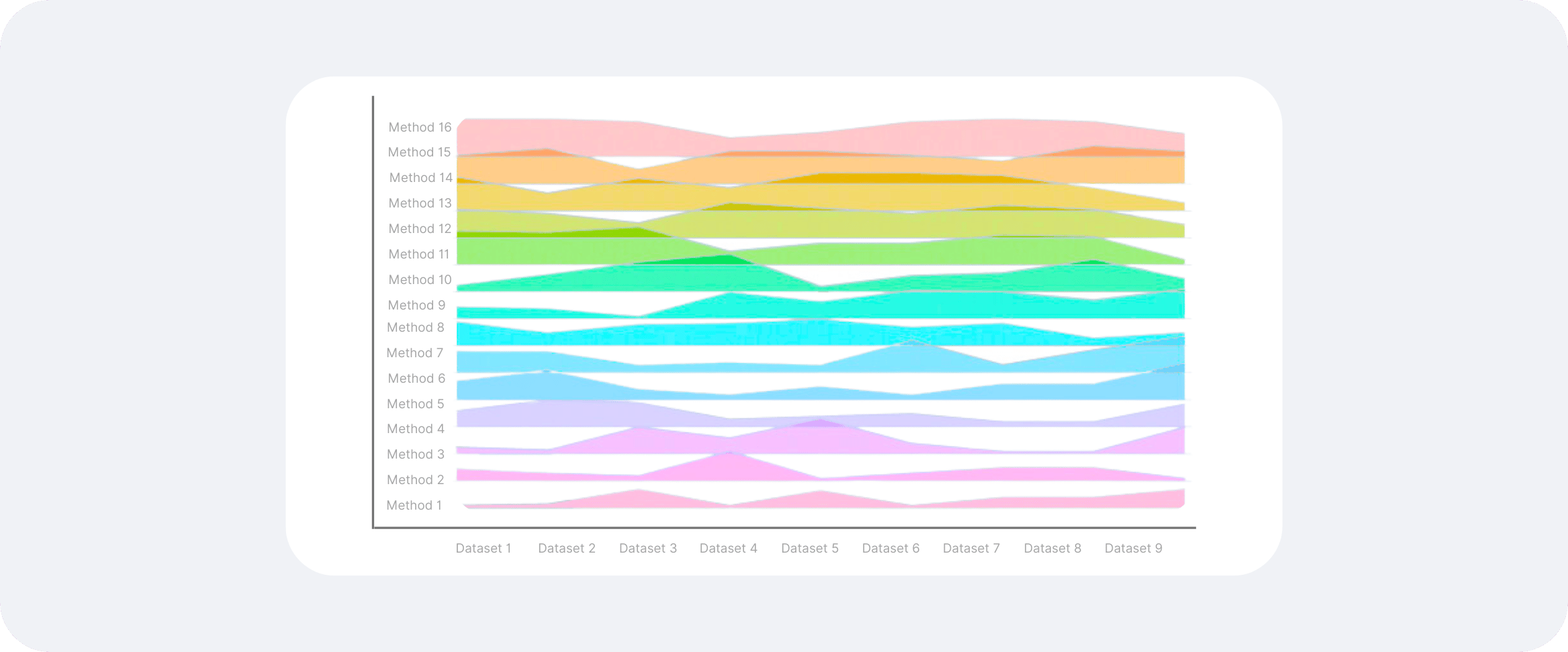

How to evaluate whether integration worked

A clean UMAP is not evidence. Good batch correction should improve technical mixing without destroying known biology.

That means evaluation should combine quantitative metrics and biological checks.

Quantitative checks

Common metrics include:

- kBET, which tests whether local neighborhoods have the expected batch composition

- LISI, which can score both batch mixing (often reported as iLISI) and cell-type separation (cLISI)

- graph connectivity or related neighborhood preservation metrics

Metrics are useful, but they are not sufficient in isolation. A method can score well on batch mixing by overmerging cell states.
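As an illustration of what a local mixing score measures, the sketch below computes an unweighted inverse Simpson index over each cell's k nearest neighbors. It is a simplified stand-in for LISI, which uses distance-based weights, and the function name and synthetic embeddings are hypothetical.

```python
import numpy as np


def inverse_simpson_per_cell(embedding, labels, k=10):
    """Effective number of distinct labels among each cell's k nearest neighbors."""
    embedding = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)
    diff = embedding[:, None, :] - embedding[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))
    np.fill_diagonal(dist, np.inf)  # exclude the cell itself
    nearest = np.argsort(dist, axis=1)[:, :k]
    scores = np.empty(embedding.shape[0])
    for i, idx in enumerate(nearest):
        _, counts = np.unique(labels[idx], return_counts=True)
        proportions = counts / k
        scores[i] = 1.0 / np.sum(proportions ** 2)
    return scores


# Hypothetical embeddings with two batches of 100 cells each.
rng = np.random.default_rng(0)
batch = np.array(["a", "b"] * 100)
mixed_embedding = rng.normal(0, 1, size=(200, 2))
separated_embedding = mixed_embedding.copy()
separated_embedding[batch == "b"] += 50.0  # push batch "b" far away

mixed = inverse_simpson_per_cell(mixed_embedding, batch)
separated = inverse_simpson_per_cell(separated_embedding, batch)
print(f"mean score, mixed: {mixed.mean():.2f}")
print(f"mean score, separated: {separated.mean():.2f}")
```

With two batches the score ranges from 1 (neighbors all from one batch) to 2 (an even split). Running the same function with cell-type labels instead of batch labels gives the complementary check: there, values close to 1 are what you want.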

Biological checks

These matter just as much:

- do known marker-defined populations remain separable

- do expected rare populations survive integration

- do condition-specific states remain detectable where biologically expected

- do pseudobulk or donor-level comparisons still agree with the integrated view

- are known trajectories still coherent after correction

In real projects, the best validation is often a small panel of expected markers, sample-level sanity checks, and agreement with orthogonal knowledge about the tissue.

The main failure mode is overcorrection

Overcorrection happens when integration removes true biological variation because it resembles a batch-associated signal. This is common when condition, donor, and cell state are entangled.

A few examples:

- tumor-specific malignant states get forced toward normal reference populations

- activated immune states present only in one condition are pulled into a resting compartment

- developmental timepoint structure is flattened because the model treats time as nuisance variation

This is why many experienced analysts integrate for visualization and mapping, but return to uncorrected counts, pseudobulk summaries, or batch-aware statistical models for expression testing.

A practical decision framework

If you are analyzing scRNA-seq data today, a sensible decision tree looks like this:

Use simple normalization first when

- you are working within one dataset or one chemistry run

- donor effects are modest

- the main goal is clustering, marker discovery, and initial interpretation

Use SCTransform or scran when

- library size and composition differences are clearly affecting structure

- you need stronger variance stabilization before PCA and clustering

- you want a more robust preprocessing layer before integration

Use integration or batch correction when

- the same biology appears separated by donor, batch, or site

- you need a joint atlas or reference-aligned embedding

- label transfer, comparison across studies, or multi-donor visualization is central to the project

Avoid aggressive integration when

- condition is the biology

- rare or transitional states are the signal of interest

- you are preparing formal expression comparisons and can model batch directly instead

Preparing data for downstream analysis

Preprocessing choices affect almost every downstream result in scRNA-seq.

Clustering

Neighborhood graph construction and clustering are highly sensitive to normalization and integration choices. Poor preprocessing can create artificial clusters or hide real ones.

Differential expression

Differential expression is especially vulnerable to misuse of corrected matrices. In many cases, a better strategy is to define groups using an integrated embedding, then perform testing on uncorrected or pseudobulked data while modeling donor or batch explicitly.
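The pseudobulk step in that strategy amounts to summing raw counts per donor and group. Below is a minimal NumPy sketch, assuming a dense cells-by-genes count matrix and group labels that were defined beforehand, for example clusters from an integrated embedding; the function name and the toy data are hypothetical.

```python
import numpy as np


def pseudobulk(counts, donors, groups):
    """Sum raw counts over all cells that share a (donor, group) pair."""
    counts = np.asarray(counts)
    keys = sorted(set(zip(donors, groups)))
    row_of = {key: row for row, key in enumerate(keys)}
    summed = np.zeros((len(keys), counts.shape[1]), dtype=counts.dtype)
    for cell, key in enumerate(zip(donors, groups)):
        summed[row_of[key]] += counts[cell]
    return keys, summed


# Hypothetical data: 4 cells x 2 genes, uncorrected counts.
counts = np.array([[1, 0],
                   [2, 3],
                   [4, 1],
                   [0, 5]])
donors = ["d1", "d1", "d2", "d2"]
groups = ["T", "T", "T", "B"]

keys, summed = pseudobulk(counts, donors, groups)
for key, row in zip(keys, summed):
    print(key, row)
```

The resulting donor-level profiles can then go into a count-based statistical model that includes donor or batch as a covariate, so the integrated embedding is used only to define the groups and never supplies the values being tested.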

Trajectory inference

Trajectory methods rely on local geometry. If batch structure dominates the graph, trajectories are misleading. If integration is too aggressive, intermediate states can disappear.

Annotation and reference mapping

Cell type annotation improves when the latent space is biologically coherent, but the same warning applies: a well-mixed embedding is useful only if it still respects tissue-specific and condition-specific states.

What matters most in 2026

For modern scRNA-seq analysis, the key shift is not that one method has won. It is that analysts now treat normalization, integration, visualization, and statistical testing as separate layers with different objectives.

That is a healthier way to work. Normalize to stabilize cell-level technical variation. Integrate only when the scientific question requires cross-batch alignment. Evaluate integration with both metrics and marker biology. Then perform downstream inference in a representation appropriate for the task, rather than assuming one corrected matrix should serve every purpose.

Conclusion

Batch effect correction and normalization are both necessary concepts in scRNA-seq, but they solve different problems. Normalization makes cells comparable within a dataset. Integration aligns shared populations across batches, donors, or studies. Conflating the two leads to poor preprocessing decisions and, eventually, poor biological interpretation.

The practical goal is not maximal mixing. It is to preserve the cellular programs that matter while reducing technical structure enough to make comparison possible. In a well-run single-cell workflow, batch correction is a targeted analytical decision, not a default reflex.

For teams that want to work through these steps without maintaining a full custom coding stack, Nygen Analytics provides a no-code environment for single-cell preprocessing, integrated exploration, annotation, and figure generation, while still keeping the biological interpretation front and center.